Why AI interview questions are different

Most "interview question" lists you find online were written for human interviewers running 45-minute synchronous calls. AI voice interviews work differently — they're typically 5–10 minutes, structured around a fixed rubric, and run unattended at any hour. Question design has to adapt.

A question that works well in an AI voice interview has four characteristics:

- Elicits a narrative response. The candidate's tone, structure, and depth of answer carry as much signal as the literal content.

- Maps to a named rubric criterion. Vague questions produce vague scoring. Each question should have a specific criterion attached.

- Supports a defined follow-up. AI can ask a clarifying question if the initial answer is thin — but only if you've defined what "thin" looks like.

- Avoids requiring visual artifacts. No whiteboarding, no shared screens, no diagrams. Voice-only.

This guide gives you copy-paste templates by role (engineering, sales, customer support, and product), plus the rubric criterion each question maps to and the follow-up to use if the initial answer needs more depth. For the broader regulatory frame (EU AI Act, NYC Local Law 144, GDPR Article 22), see our compliance guides.

The anatomy of a good AI interview question

Here's a complete template, dissected:

Q: "Walk me through a recent project where you had to debug a production issue. What was the problem, what did you try first, and what actually worked?"

What makes this question work for AI screening:

- Specific, recent, concrete. "A recent project" anchors the response in real experience rather than hypotheticals. Hypotheticals are easy to bullshit; concrete examples are not.

- Three explicit sub-questions. "What was the problem" → "what did you try first" → "what actually worked." This gives the candidate a structure to answer with and gives the AI a structure to score against.

- Named rubric mapping. Engineering rubric → "ownership and debugging methodology." Communication rubric → "ability to structure a technical narrative under time pressure."

- Defined follow-up branch. If the candidate gives a generic answer ("I check the logs"), the follow-up is: "Can you walk me through one specific instance — what the logs showed, and what your next step was?"

The full structure for any AI interview question:

Question: <the prompt>

Rubric criterion: <what you're scoring>

What good looks like: <a sentence describing the strong answer pattern>

Follow-up trigger: <when to probe>

Follow-up question: <the probe>

The four question banks below follow this pattern.
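If you keep your question bank in code or config, the five-field structure above maps naturally onto a small record type. The following is a minimal sketch, not a format any particular platform requires: the class name `InterviewQuestion`, its field names, and the `missing_fields` helper are all assumptions made for illustration, and the "what good looks like" wording is an example rather than something specified above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InterviewQuestion:
    """One AI interview question with the five fields described above."""

    question: str
    rubric_criterion: str
    what_good_looks_like: str
    follow_up_trigger: str
    follow_up_question: str

    def missing_fields(self) -> list[str]:
        """Return the names of any fields that were left blank."""
        return [name for name, value in vars(self).items() if not value.strip()]


debugging_question = InterviewQuestion(
    question=(
        "Walk me through a recent project where you had to debug a production "
        "issue. What was the problem, what did you try first, and what "
        "actually worked?"
    ),
    rubric_criterion="Ownership and debugging methodology",
    # Illustrative wording only; write your own description of a strong answer.
    what_good_looks_like="Names one specific incident and the steps taken, in order.",
    follow_up_trigger='Generic answer, e.g. "I check the logs"',
    follow_up_question=(
        "Can you walk me through one specific instance: what the logs showed, "
        "and what your next step was?"
    ),
)

# An empty list means every field has been filled in.
print(debugging_question.missing_fields())
```

Checking for blank fields before a question goes live is a cheap way to catch the most common gap, which is a question with no defined follow-up trigger.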

Engineering interview questions

For mid-level individual contributor roles. Adjust for seniority by changing how much depth you expect in the answer.

| Question | What you're listening for | Follow-up if answer is thin |
|---|---|---|
| Walk me through a recent project where you had to debug a production issue. What was the problem, what did you try first, and what actually worked? | Ownership, debugging methodology, learning from failure | "Can you walk me through one specific instance: what the logs showed, and what your next step was?" |
| Tell me about a time you disagreed with a teammate's technical approach. How did you handle it? | Collaboration, conflict resolution, technical communication | "How did you decide it was worth pushing back versus just going along?" |
| Describe a piece of code or system you shipped that you're proud of, and one you'd change if you could go back. | Code quality awareness, growth mindset, retrospection | "What would you change about it specifically, and why didn't you do it that way originally?" |
| Walk me through how you'd approach a system that needs to handle 10× its current load. | Systems thinking, capacity planning, willingness to ask clarifying questions | "What would you measure first to know whether your changes worked?" |
| Tell me about a time you had to learn a new technology or framework on a tight deadline. | Adaptability, learning approach, time-to-productivity | "How did you decide what to skip in the documentation and what to read carefully?" |

Pitfalls specific to engineering interviews: avoid trivia questions ("what's the time complexity of quicksort?"), live-coding requests (no shared editor in voice), and questions that depend on the candidate having worked in your specific stack.

Sales and GTM interview questions

For account executive, business development, and sales development roles.

| Question | What you're listening for | Follow-up if answer is thin |
|---|---|---|
| Walk me through your most recent closed-won deal. What was the customer's biggest objection and how did you address it? | Deal mechanics, objection handling, customer empathy | "What was your champion most worried about, and how did you arm them?" |
| Tell me about a deal you lost. What did you learn from it? | Self-awareness, retrospection, willingness to own failure | "Looking back, what's the earliest signal you missed that this would lose?" |
| Describe a time you had to qualify out of a deal that looked promising. How did you make the call? | Discipline, qualification rigor, time-management | "What was your manager's reaction when you told them?" |
| How do you handle a champion who goes silent for two weeks after a strong demo? | Persistence, multi-threading, customer communication style | "What's your specific cadence: how many touches over what timeframe?" |
| Tell me about the most complex stakeholder map you've navigated. How did you build consensus? | Enterprise selling skills, stakeholder mapping, patience | "Who was the hardest person to convert, and what changed their mind?" |

Pitfalls specific to sales interviews: avoid quota-recital questions ("did you hit quota?"), product-pitch requests (you're not testing if they can pitch your product yet), and trick "sell me this pen" questions that don't predict actual deal performance.

Customer support and operations interview questions

For support specialists, customer success managers, and ops roles.

| Question | What you're listening for | Follow-up if answer is thin |
|---|---|---|
| Walk me through a recent escalation you handled. What was the customer's emotional state and how did you de-escalate? | Empathy, calm under pressure, communication clarity | "What did you say in the first 30 seconds of that call, and why?" |
| Tell me about a time you had to deliver bad news to a customer. How did you frame it? | Honesty, professional empathy, ownership | "How did the customer react, and what did you do next?" |
| Describe a process improvement you suggested or implemented in your last role. | Initiative, systems thinking, willingness to push for change | "Who pushed back, and how did you handle that pushback?" |
| How do you decide what to prioritize when your queue is overloaded? | Triage skills, judgment, ability to articulate a framework | "Walk me through the last time your queue actually overflowed: what specifically did you do?" |
| Tell me about a customer interaction that taught you something about your product or company. | Curiosity, learning orientation, customer-centric mindset | "What did you do with that information after the call?" |

Pitfalls specific to support interviews: avoid scripted role-plays (they test acting, not real responses), questions that reward memorized "perfect customer service" templates, and questions about hypothetical perfect-customer scenarios.

Product and design interview questions

For PMs, designers, and design researchers.

| Question | What you're listening for | Follow-up if answer is thin |
|---|---|---|
| Walk me through a feature you shipped. How did you decide what was in scope versus out? | Scoping discipline, tradeoff thinking, ability to defend cuts | "What was the most painful thing you cut, and would you cut it again?" |
| Tell me about a time user research changed your mind about a design or product decision. | Research literacy, intellectual humility, evidence orientation | "What specifically did you see in the research that changed your view?" |
| Describe a feature you killed or argued against. What was your reasoning? | Conviction, willingness to push back, business judgment | "Did the team listen, and what was the outcome?" |
| How do you approach a product or design problem when the brief is ambiguous? | Problem-framing skills, comfort with ambiguity, structured curiosity | "What's the first conversation you'd have, and with whom?" |
| Tell me about a time you got a design or product decision wrong. What did you learn? | Self-awareness, retrospection, growth orientation | "What would you do differently if you encountered the same situation tomorrow?" |

Pitfalls specific to product/design interviews: avoid portfolio-walkthrough questions (those need a screen), brand-trivia questions ("what do you think of our app?"), and abstract design philosophy questions that don't elicit specific behaviors.

Common pitfalls across all roles

A few question patterns to avoid regardless of the role you're hiring for:

1. Leading questions. "How do you handle stress? We work in a fast-paced environment." That tells the candidate exactly what to say. Strip the cue.

2. Hypotheticals without anchors. "If you had a difficult customer..." → easy to invent a confident-sounding answer. Anchor in past behavior: "Tell me about a difficult customer you actually had."

3. Yes/no questions. "Do you work well in teams?" → you'll get "yes." Always. Reframe as "Tell me about your most recent team project — what went well and what didn't."

4. Multi-part questions that aren't structured. "Tell me about your background, your goals, why you're interested in this role, and your salary expectations." → the candidate will pick one and you'll lose the rest. Either ask one question at a time or use a clearly structured multi-part prompt like the engineering debugging example above.

5. Questions with biased framing. "How do you handle being the only [demographic] on a team?" → activates stereotype threat and tells the candidate what answer you expect. If you want to assess collaboration in diverse teams, ask everyone the same neutral question.

6. Questions that aren't measurable. "Tell me about yourself" produces open-ended answers with no rubric criterion to score against, so scores vary from one candidate to the next. Replace with specific behavioral prompts.

For the broader bias-mitigation framework that good question design fits into, see our guide on building inclusive hiring processes and our analysis of AI vs human bias in hiring.

A note on localizing question templates for 30+ languages

Direct translation of interview questions across languages frequently produces unnatural or even unanswerable prompts. A few patterns to watch:

- Idiom-heavy prompts. "Walk me through" doesn't translate cleanly into many languages. Use the native equivalent of "Tell me step by step…" or "Describe in detail…" instead.

- Politeness register. Direct questions that are normal in English ("Tell me about a deal you lost") can read as confrontational in higher-context languages (Japanese, Korean). Soften with framing: "I'd like to hear about a learning experience — could you describe a deal that didn't close as you'd hoped?"

- Domain vocabulary. "Champion," "stakeholder," "queue" — sales/ops/support jargon often lacks a direct equivalent. Either define inline or replace with the local term in actual use.

- Cultural specificity. "Tell me about a time you pushed back on your manager" reads very differently in cultures with strong hierarchy norms. Reframe as "Tell me about a time you suggested a different approach" — same signal, more neutral framing.

The shortcut: have a native speaker who has hired in the target market review your translated templates before you deploy them.

A copy-paste starter template you can customize

Use this skeleton for any new role. Fill in the bracketed placeholders.

ROLE: [Job title]

INTERVIEW LENGTH: 5-10 minutes (4-6 questions)

SCORING RUBRIC CRITERIA: [3-5 named criteria, e.g. Technical depth, Ownership, Communication clarity, Collaboration, Learning orientation]

QUESTION 1 (warm-up — low stakes, signals communication style):

"Tell me about your current role and what you spend most of your time on."

Maps to: Communication clarity, Role fit

Follow-up trigger: answer < 30 seconds

Follow-up: "What's a typical week look like for you?"

QUESTION 2 (technical/skill — primary criterion for the role):

"[Role-specific question from the banks above]"

Maps to: [Primary technical/skill criterion]

Follow-up trigger: generic or shallow answer

Follow-up: [Specific probe]

QUESTION 3 (collaboration/conflict — character signal):

"[Behavioral question about working with others]"

Maps to: Collaboration

Follow-up trigger: candidate stays at the abstract level

Follow-up: "Can you give me a specific example?"

QUESTION 4 (failure/learning — growth signal):

"[Question about a mistake or failure]"

Maps to: Learning orientation, Self-awareness

Follow-up trigger: candidate deflects or blames others

Follow-up: "What was your part in how it went?"

QUESTION 5 (closing — candidate's questions, intent signal):

"What questions do you have about the role or the team?"

Maps to: Engagement, Preparation

No follow-up — let the silence work.
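The numeric parts of the skeleton above (4-6 questions, 3-5 rubric criteria, a follow-up when the warm-up answer runs under 30 seconds) are simple enough to check automatically. Here is a rough sketch of what that could look like; the function names `needs_follow_up` and `check_plan` are made up for this example and don't correspond to any specific tool.

```python
def needs_follow_up(answer_seconds: float, min_seconds: float = 30.0) -> bool:
    """Return True when a spoken answer is shorter than the trigger threshold."""
    return answer_seconds < min_seconds


def check_plan(question_count: int, criteria: list[str]) -> list[str]:
    """Return a list of problems with an interview plan; empty means it passes."""
    problems = []
    if not 4 <= question_count <= 6:
        problems.append(f"expected 4-6 questions, got {question_count}")
    if not 3 <= len(criteria) <= 5:
        problems.append(f"expected 3-5 rubric criteria, got {len(criteria)}")
    return problems


criteria = [
    "Technical depth",
    "Ownership",
    "Communication clarity",
    "Collaboration",
    "Learning orientation",
]

print(check_plan(5, criteria))   # the skeleton above: 5 questions, 5 criteria
print(check_plan(9, criteria))   # too many questions for a 5-10 minute interview
print(needs_follow_up(22.0))     # a 22-second warm-up answer
```

A duration threshold only covers the warm-up trigger. The other triggers in the skeleton ("generic or shallow answer", "candidate deflects or blames others") depend on what was said, not how long it took, so they need a content-based judgment that this sketch doesn't attempt.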

Frequently asked questions

How many questions should an AI interview have?

For a 5–10 minute interview, four to six questions. More than that and you're either rushing each answer or running long enough that candidates lose engagement. Quality of probes matters more than question count.

Should the same questions be used for every candidate in the same role?

Yes. Question consistency is a precondition for fair scoring — different questions produce non-comparable scores, and that's both bad measurement and a bias risk. The follow-up branches can vary based on what each candidate said, but the primary questions should be identical.

Do these templates work for senior roles?

Yes, with two adjustments: lengthen the time budget to 10–15 minutes (senior candidates need room to give layered answers), and shift the rubric weights toward judgment, leadership, and pattern-recognition criteria rather than execution depth.
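To make the weight shift concrete, here is a small sketch of a weighted rubric score. Everything in it is hypothetical: the criterion names, the 1-5 scale, and the weights are placeholders chosen to show the arithmetic, not recommended values.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-criterion scores; weights need not sum to 1."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


# Hypothetical weight profiles: the senior profile leans on judgment and leadership.
MID_LEVEL_WEIGHTS = {"execution_depth": 2, "judgment": 1, "leadership": 1}
SENIOR_WEIGHTS = {"execution_depth": 1, "judgment": 2, "leadership": 2}

# A hypothetical candidate who is strong on execution but weaker elsewhere (1-5 scale).
candidate = {"execution_depth": 4.0, "judgment": 2.0, "leadership": 2.0}

print(weighted_score(candidate, MID_LEVEL_WEIGHTS))  # 3.0
print(weighted_score(candidate, SENIOR_WEIGHTS))     # 2.4
```

The same answers score lower under the senior profile because the criteria the candidate is weakest on now carry more of the total.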

What about technical roles where I need to assess actual coding ability?

Voice interviews aren't a substitute for a coding assessment. Use AI voice interviews for the screening layer (communication, ownership, collaboration, systems thinking, learning) and a separate take-home or live coding assessment for hands-on technical depth. The two layers test different things.

Can I use these question templates in [our AI hiring tool] / [a competitor's tool]?

The questions themselves aren't tied to any platform: copy them, adapt them, and build them into whatever tool you use. The structure (question → rubric criterion → follow-up trigger → follow-up question) is the part that makes them work.

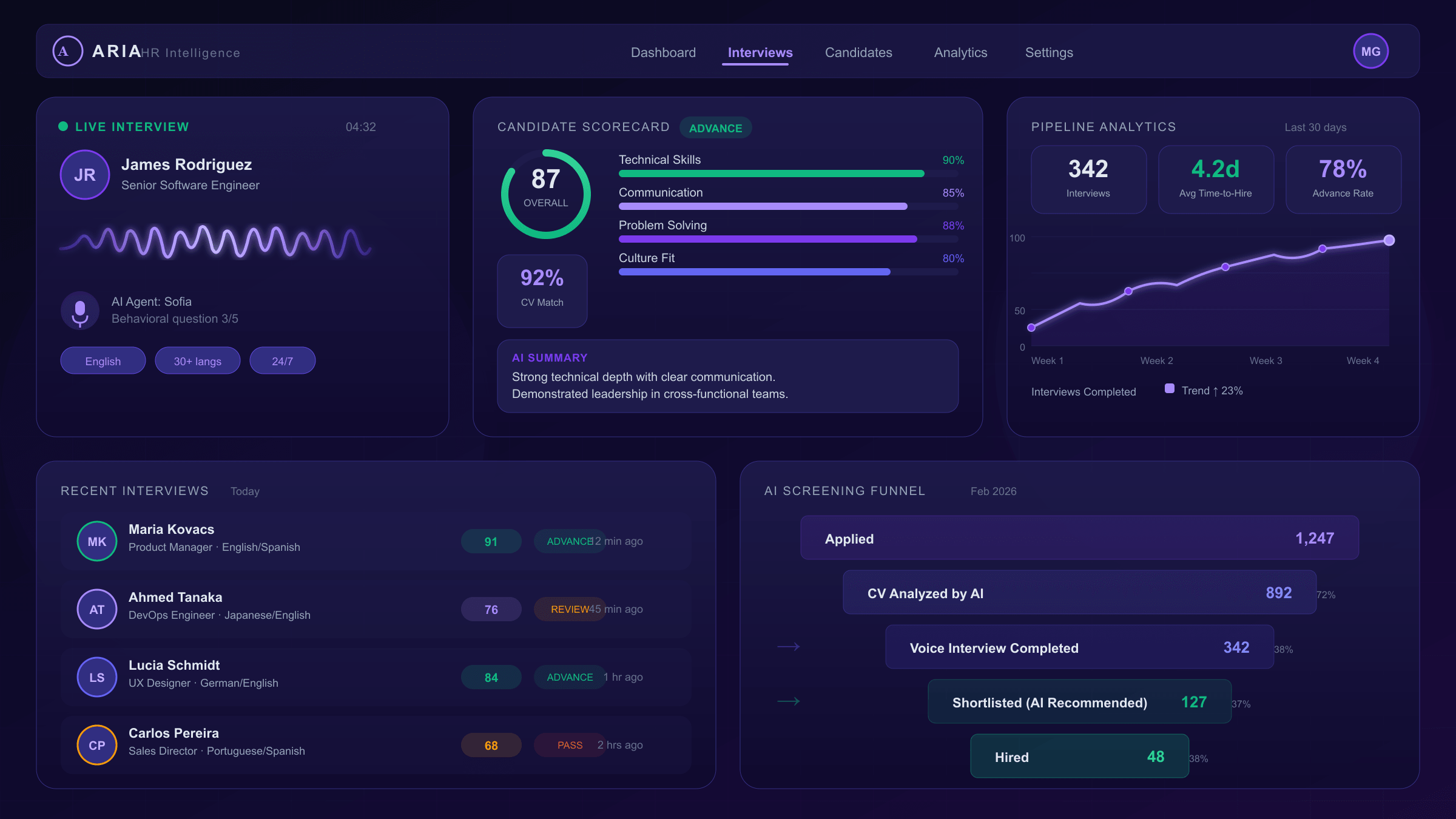

Want a fully-configured AI interview platform with these templates already wired up?

ARIA ships with role-specific question banks for engineering, sales, support, and product, plus an editable template library so your team can customize and version-control its own questions.